TL;DR

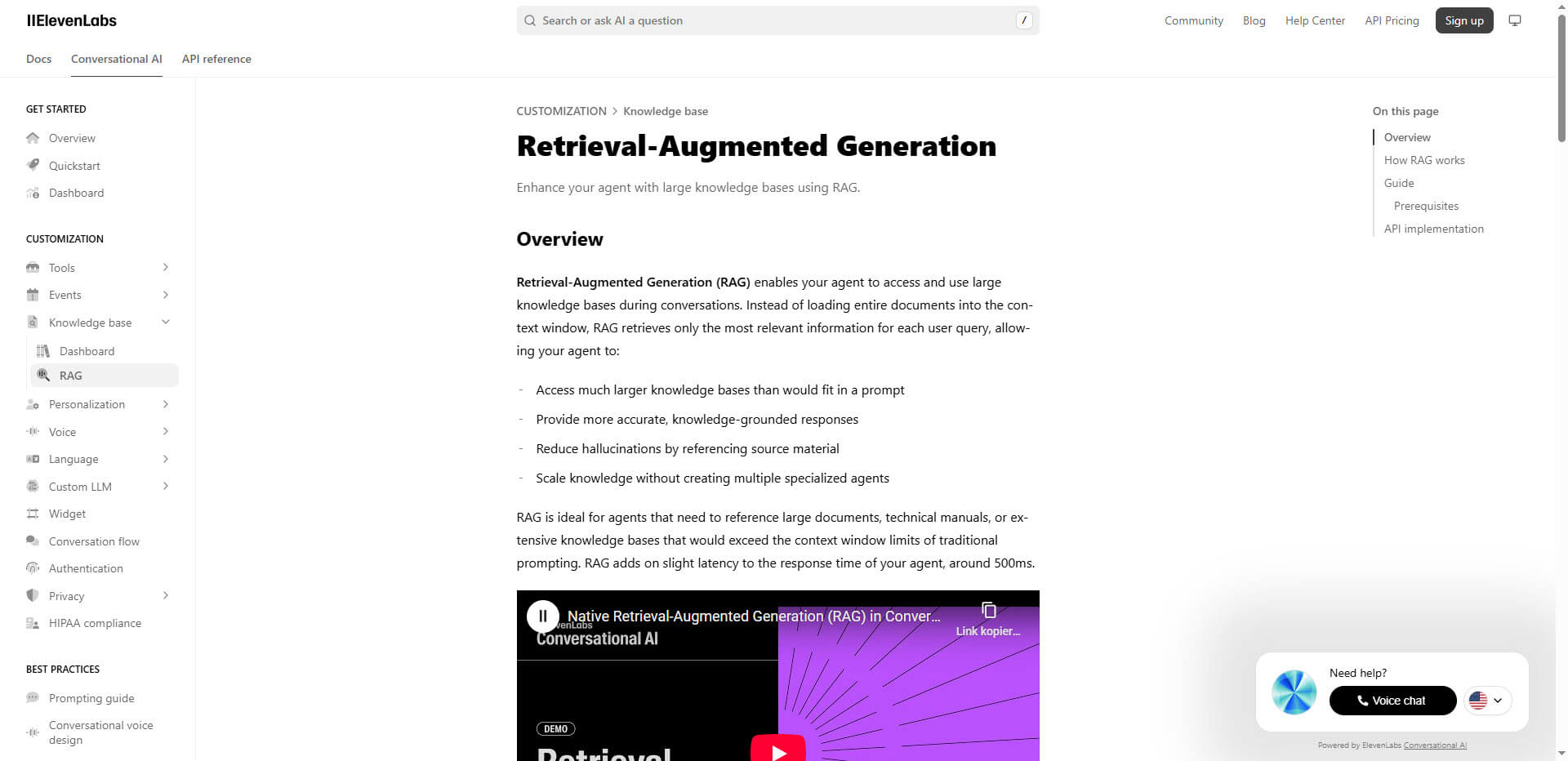

RAG (Retrieval-Augmented Generation) connects LLMs to your own data, reducing hallucinations and enabling AI to answer questions about private documents.

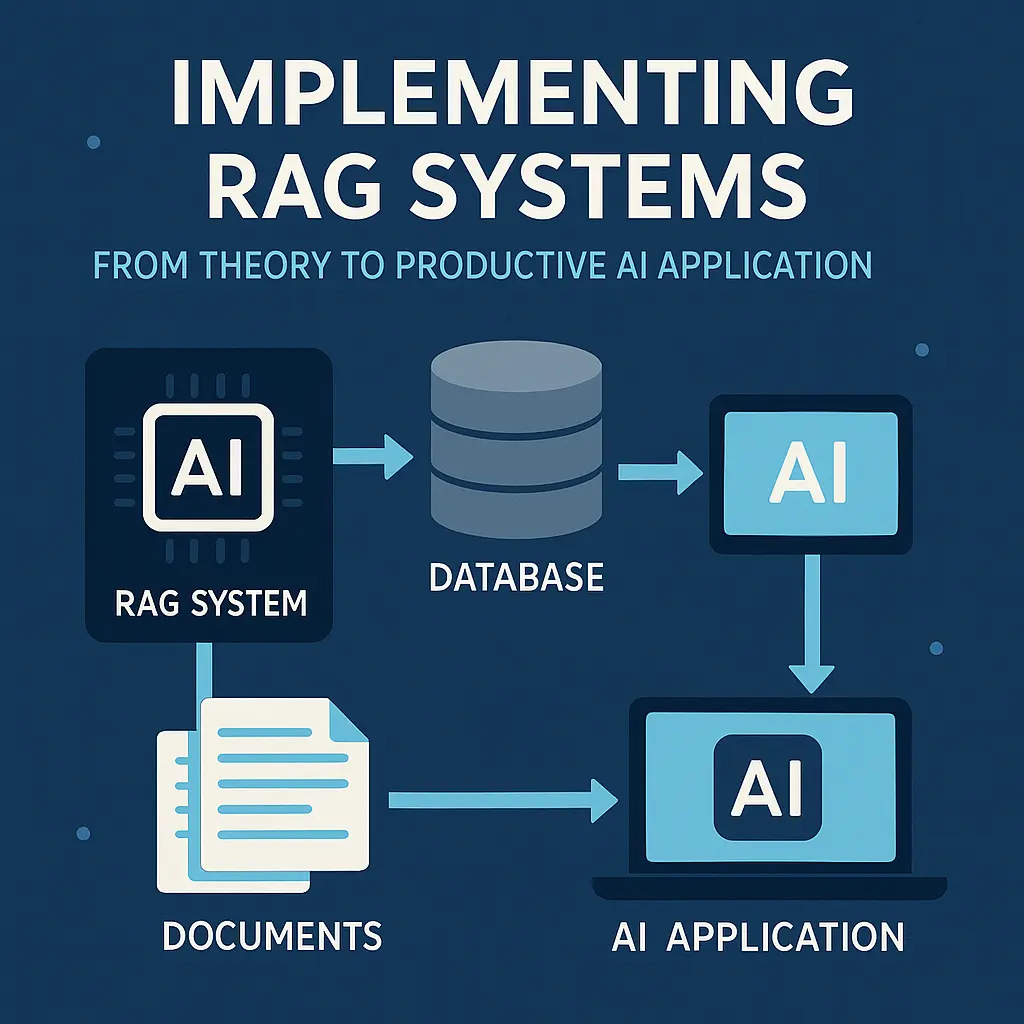

- Core concept: Retrieve relevant chunks from a vector database → feed them to the LLM as context

- Key components: Embedding model + Vector DB (Pinecone, Weaviate) + LLM (GPT-4, Claude)

- Best for: Company knowledge bases, customer support, document Q&A, legal/medical research

- Typical stack: LangChain or LlamaIndex + OpenAI Embeddings + Pinecone

Bottom Line: RAG is the most practical way to make AI useful with your own data. Start with a simple prototype, then optimize retrieval quality.

You don’t just want to understand RAG systems, you want to implement them ready for production? This guide will take you from data strategy to live operation in 30 days – with concrete costs, proven tool decisions and a real case study from the SME sector.