The most important information in brief

- IronClaw offers a secure, local AI infrastructure based on Rust and expands on the OpenClaw concept.

- WASM sandboxing and strict credential injection at the boundary ensure enterprise-grade security.

- The source code is now available as an open-source project for developers on GitHub.


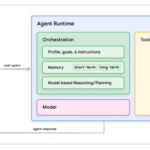

Developing autonomous agents requires not only intelligence but, above all, control and security. With IronClaw, NearAI presents a hardened successor to the popular OpenClaw. The agent is written entirely in Rust and aims to close the typical security gaps of dynamic LLM interactions through a strict “defense-in-depth” design.


The innovations in detail

Development centers on a Rust-based architecture, with native performance and memory safety as its foundation. Unlike pure Python frameworks, which often reach their limits under high load, Rust offers efficient parallel processing and strong compile-time type safety. For long-term memory, IronClaw uses PostgreSQL with the pgvector extension, which provides persistent, scalable vector search.
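To make the vector-search idea concrete, here is a minimal in-memory sketch of what a similarity lookup does. It is not IronClaw's code: the `Memory` struct and the `top_k` function are hypothetical names, and a pgvector-backed store would run the equivalent ranking inside PostgreSQL (pgvector exposes cosine distance through its `<=>` operator) rather than in application code.

```rust
/// One stored memory: the original text plus its embedding vector.
/// (Hypothetical type, for illustration only.)
pub struct Memory {
    pub text: String,
    pub embedding: Vec<f32>,
}

/// Cosine similarity of two vectors. Returns 0.0 for mismatched lengths
/// or zero vectors instead of dividing by zero.
pub fn cosine_similarity(a: &[f32], b: &[f32]) -> f32 {
    if a.len() != b.len() {
        return 0.0;
    }
    let dot: f32 = a.iter().zip(b).map(|(x, y)| x * y).sum();
    let norm_a = a.iter().map(|x| x * x).sum::<f32>().sqrt();
    let norm_b = b.iter().map(|x| x * x).sum::<f32>().sqrt();
    if norm_a == 0.0 || norm_b == 0.0 {
        0.0
    } else {
        dot / (norm_a * norm_b)
    }
}

/// Returns the `k` memories most similar to `query`, best match first.
pub fn top_k<'a>(memories: &'a [Memory], query: &[f32], k: usize) -> Vec<&'a Memory> {
    let mut scored: Vec<(f32, &Memory)> = memories
        .iter()
        .map(|m| (cosine_similarity(&m.embedding, query), m))
        .collect();
    // Sort descending by score; total_cmp gives a total order over f32.
    scored.sort_by(|x, y| y.0.total_cmp(&x.0));
    scored.into_iter().take(k).map(|(_, m)| m).collect()
}
```

A linear scan like this is only reasonable for small collections; the point of a database-backed store is that indexing and persistence are handled for you.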

Technically, IronClaw stands out thanks to three key security mechanisms:

- WASM sandbox: External tools and code written by the agent itself are not executed on the host system, but in an isolated WebAssembly environment. This prevents faulty or malicious code from gaining access to the file system.

- Credential injection at the boundary: Sensitive API keys are never loaded directly into the context of the LLM. Injection only occurs at the point of execution, minimizing the risk of leaks due to hallucinations.

- Self-expanding capabilities: IronClaw is designed to dynamically create the functions it needs. However, thanks to the sandbox, this self-expansion takes place within strictly defined guidelines.
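The credential-injection idea from the list above can be sketched in a few lines. This is an illustration under assumptions, not IronClaw's implementation: the `SecretStore` type, the `{{NAME}}` placeholder syntax, and the `[REDACTED:NAME]` marker are all invented for this example. The model only ever sees and emits placeholders; the host swaps in the real value at the moment of execution and masks it again in anything that flows back.

```rust
use std::collections::HashMap;

/// Secrets held by the host process only; never serialized into a prompt.
/// (Hypothetical type, for illustration only.)
#[derive(Default)]
pub struct SecretStore {
    secrets: HashMap<String, String>,
}

impl SecretStore {
    pub fn insert(&mut self, name: &str, value: &str) {
        self.secrets.insert(name.to_string(), value.to_string());
    }

    /// Replaces each `{{NAME}}` placeholder with the stored secret.
    /// Meant to be called at the execution boundary, after the model
    /// has produced its output.
    pub fn inject(&self, template: &str) -> String {
        let mut out = template.to_string();
        for (name, value) in &self.secrets {
            out = out.replace(&format!("{{{{{name}}}}}"), value);
        }
        out
    }

    /// Masks any secret value found in tool output before that output
    /// is handed back to the model.
    pub fn redact(&self, text: &str) -> String {
        let mut out = text.to_string();
        for (name, value) in &self.secrets {
            if value.is_empty() {
                continue; // an empty pattern would match everywhere
            }
            out = out.replace(value.as_str(), &format!("[REDACTED:{name}]"));
        }
        out
    }
}
```

With a key stored under `API_KEY`, `inject("Authorization: Bearer {{API_KEY}}")` yields the real header for the outbound request, while the prompt history still contains only the placeholder.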

Why this is important

Previous local agent frameworks often suffered from a critical dilemma: they were either flexible but insecure (e.g., through direct shell access) or secure but severely limited in their capabilities. The IronClaw AI Agent addresses precisely this “security vacuum” in current agent development.

By isolating critical processes via WASM, the agent goes from being a security liability to a tool that is realistically usable in professional environments.

The migration to Rust also signals a maturing process in the industry. It is no longer just about rapid prototyping with Python scripts, but about robust, memory-safe systems. For developers, this means that an agent can be run “unattended” (headless) without fear that a successful “prompt injection” attack will allow the agent to format the entire operating system or exfiltrate passwords.
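Why a sandbox limits the damage of a prompt injection is easiest to see as a deny-by-default policy check: code inside the sandbox cannot touch the host directly, so every file read or network call has to pass through a host function that consults a policy first. The sketch below assumes a hypothetical `Policy` type with invented method names; it does not reflect IronClaw's actual API or any particular WASM runtime.

```rust
use std::collections::HashSet;
use std::path::{Component, Path, PathBuf};

/// What a sandboxed tool may ask the host for. Anything not listed is denied.
/// (Hypothetical type, for illustration only.)
pub struct Policy {
    read_roots: Vec<PathBuf>,
    http_hosts: HashSet<String>,
}

impl Policy {
    pub fn new(read_roots: &[&str], http_hosts: &[&str]) -> Self {
        Self {
            read_roots: read_roots.iter().map(|r| PathBuf::from(*r)).collect(),
            http_hosts: http_hosts.iter().map(|h| h.to_string()).collect(),
        }
    }

    /// A read is allowed only for a path without `..` segments that lies
    /// under one of the granted roots. `Path::starts_with` compares whole
    /// components, so `/workspace-evil` does not match `/workspace`.
    pub fn may_read(&self, path: &str) -> bool {
        let path = Path::new(path);
        let escapes = path
            .components()
            .any(|c| matches!(c, Component::ParentDir));
        !escapes && self.read_roots.iter().any(|root| path.starts_with(root))
    }

    /// An outbound request is allowed only to an exactly matching host.
    pub fn may_connect(&self, host: &str) -> bool {
        self.http_hosts.contains(host)
    }
}
```

Under such a policy, an injected instruction to read `/etc/passwd` or to post data to an unknown host fails at the boundary, regardless of what the model was tricked into requesting.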

Availability & Conclusion

IronClaw is now available as an open-source project on GitHub. It is primarily aimed at developers and system architects who want to build their own agent-based products and need to maintain control over the data.

Conclusion: Those who have previously avoided autonomous local agents due to security concerns will finally find the necessary technological safety net in IronClaw – provided they are willing to embrace the Rust stack.

IronClaw vs Other AI Agent Frameworks


How does IronClaw stack up against the most popular AI agent frameworks available today? The table below compares the key dimensions that matter most for teams evaluating agentic tooling in 2025 and beyond.


| Feature | IronClaw | AutoGPT | Claude Code | CrewAI |
|---|---|---|---|---|
| Primary Language | Rust | Python | TypeScript | Python |
| Data Privacy | 100% local; no data leaves the machine | Cloud (OpenAI API by default) | Cloud (Anthropic API) | Cloud by default; local LLM support possible |
| Open-Source | Yes (MIT / Apache 2.0) | Yes (MIT) | Closed source | Yes (MIT) |
| Supported LLMs | Local models via Ollama, llama.cpp, and compatible backends | GPT-4 / GPT-3.5 (OpenAI); limited local support | Claude model family | OpenAI, Groq, local via LiteLLM |
| Key Strength | Maximum privacy, Rust performance, enterprise compliance | Large community, plugin ecosystem | Deep code understanding, Anthropic safety focus | Multi-agent role orchestration |
| Internet Required | No; fully air-gap capable | Yes (API calls) | Yes (API calls) | Yes (unless configured for local LLMs) |
| Deployment Environment | Local machine, on-premise servers, air-gapped networks | Local machine (with cloud LLM calls) | Local CLI (with cloud inference) | Local or cloud |


The core differentiator is clear: IronClaw is the only framework in this comparison that is designed to run without outbound API calls by default. For use cases where data confidentiality is non-negotiable, such as legal document processing, healthcare records, or financial modeling, that distinction is not a minor feature. It is the entire value proposition.


Why IronClaw Matters: Privacy, Performance, and Compliance


The rise of agentic AI has created a fundamental tension: the most capable AI systems are cloud-hosted, but the most sensitive workloads cannot go to the cloud. IronClaw resolves that tension by bringing the agent runtime to the data, rather than the other way around.


Data Privacy: Your Data Stays on Your Machine


Every prompt you send to a cloud-based AI agent passes through a third-party server. Even with encryption in transit, you are trusting the provider’s data handling, retention policies, and security posture. For individuals, this is a personal preference. For businesses handling customer data, it can be a legal liability.

IronClaw removes this exposure. Because the LLM runs locally (via Ollama, llama.cpp, or another local inference backend) and the agent orchestration layer runs on your own hardware, prompts and documents never need to leave the device. This makes IronClaw one of the very few AI agent frameworks suitable for genuinely air-gapped environments.


Rust Performance: Memory Safety Without the Overhead


Most AI tooling is written in Python. Python is excellent for rapid prototyping and has an unmatched library ecosystem, but it comes with real costs: a garbage collector that introduces latency spikes, significant memory overhead per process, and a global interpreter lock that complicates true parallelism.

Rust sidesteps these issues. Its ownership model enforces memory safety at compile time: there is no garbage collector, and safe Rust rules out dangling pointers and data races. For an agent framework that may be orchestrating dozens of concurrent tool calls or processing large documents in a loop, this performance profile is a real advantage. IronClaw inherits these properties by default, so production deployments can be expected to be faster, leaner, and more predictable than comparable Python-based setups.
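As a small illustration of the concurrency claim, the sketch below fans out one "tool call" per document using scoped threads from the standard library. The `run_tool` function is a hypothetical stand-in (it only counts words), and nothing here is taken from IronClaw's code base. What it shows is that the compiler checks, before the program runs, that the threads only read the shared input and that none of them outlives the data it borrows.

```rust
use std::thread;

/// Stand-in for a real tool call (hypothetical); here it just counts words.
fn run_tool(input: &str) -> usize {
    input.split_whitespace().count()
}

/// Runs one tool call per document on its own thread and collects the
/// results in input order. The borrow checker verifies at compile time
/// that the threads only read `docs` and that none outlives this call.
pub fn run_all(docs: &[String]) -> Vec<usize> {
    thread::scope(|scope| {
        let handles: Vec<_> = docs
            .iter()
            .map(|doc| scope.spawn(move || run_tool(doc)))
            .collect();
        handles
            .into_iter()
            .map(|handle| handle.join().expect("tool thread panicked"))
            .collect()
    })
}
```

Trying to mutate `docs` from inside one of the spawned closures would be rejected at compile time, which is the practical meaning of "no data races" in safe Rust.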


Enterprise Compliance: Built for EU AI Act and GDPR

Regulatory pressure on AI systems is accelerating. The EU AI Act, which entered into force in August 2024 and whose obligations phase in from 2025 onward, places explicit requirements on transparency, data minimization, and human oversight for AI systems used in high-risk contexts. GDPR, already in force, restricts the transfer of personal data to third-party processors without a valid legal basis and adequate safeguards.


IronClaw’s local-first architecture makes compliance significantly more straightforward. When no data leaves the organization’s infrastructure, data transfer impact assessments are simplified, processor agreements with cloud vendors are unnecessary, and audit trails remain entirely within the organization’s control. For legal, financial, and healthcare teams deploying AI agents in the EU, this is not a minor convenience — it is a compliance prerequisite that most cloud-dependent frameworks simply cannot satisfy.

“We are entering a phase where the question is no longer whether your organization uses AI agents; it is whether those agents operate inside or outside your security perimeter. For any company handling sensitive data, local AI agents like IronClaw represent the only viable path to meaningful automation without regulatory and reputational exposure. The local-first model is not a compromise on capability; with today’s open-weight models, it is increasingly a competitive architecture in its own right.”

How to Install IronClaw: Step-by-Step Guide


Getting IronClaw running is a straightforward process if you are comfortable with a terminal. The entire setup takes under 15 minutes on most modern machines. Here is how to do it.


Step 1: Install Rust


IronClaw is written in Rust, so you need the Rust toolchain installed before anything else. The official and recommended method is via rustup, the Rust toolchain installer:

    curl --proto '=https' --tlsv1.2 -sSf https://sh.rustup.rs | sh

Follow the on-screen prompts and choose the default installation. Once complete, restart your terminal or run `source "$HOME/.cargo/env"` to make the `cargo` and `rustc` commands available. Verify the installation with `rustc --version`.


Step 2: Clone the IronClaw Repository


With Rust installed, clone the IronClaw repository from GitHub to your local machine:

    git clone https://github.com/ironclaw-ai/ironclaw.git
    cd ironclaw

If you do not have Git installed, you can also download the source as a ZIP archive directly from the GitHub repository page. Once inside the project directory, build the release binary with Cargo:

    cargo build --release

This step compiles the entire project. The first build downloads and compiles all dependencies, which may take a few minutes. Subsequent builds are significantly faster because Cargo reuses the already compiled dependencies.


Step 3: Configure Your Local LLM Backend


IronClaw needs to connect to a locally running LLM inference server. The most common setup uses Ollama, which lets you pull and serve open-weight models like Llama 3, Mistral, or Phi-3 with a single command:

    # Install Ollama, then pull a model
    ollama pull llama3

Next, open IronClaw’s configuration file (typically config.toml in the project root) and point it at your local inference endpoint. For an Ollama server running on default settings, this looks like:

    [llm]
    endpoint = "http://localhost:11434"
    model = "llama3"

Adjust the model value to match whichever model you pulled. You can also configure tool permissions, memory settings, and task timeout limits in the same file.
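If the goal is a setup where nothing leaves the machine, it can be worth checking that the configured endpoint really points at the local host before the agent starts. The following is a minimal sketch of such a guard, assuming the endpoint is a plain `scheme://host[:port]` URL like the one above. The function names are hypothetical and not part of IronClaw, and the URL handling is deliberately simple rather than a full parser.

```rust
use std::net::IpAddr;

/// Extracts the host from a URL such as `http://localhost:11434/api`.
/// Minimal on purpose: handles `scheme://[user@]host[:port][/path]`
/// and bracketed IPv6 literals.
fn host_of(url: &str) -> Option<&str> {
    let (_, rest) = url.split_once("://")?;
    let authority = rest
        .split(|c: char| matches!(c, '/' | '?' | '#'))
        .next()?;
    // Drop optional `user:password@` userinfo.
    let authority = authority
        .rsplit_once('@')
        .map_or(authority, |(_, host)| host);
    if let Some(bracketed) = authority.strip_prefix('[') {
        // Bracketed IPv6 literal, e.g. `[::1]:11434`.
        return bracketed.split_once(']').map(|(host, _)| host);
    }
    authority.split(':').next()
}

/// True when the endpoint's host is `localhost` or a loopback IP address.
pub fn is_local_endpoint(url: &str) -> bool {
    match host_of(url) {
        Some("localhost") => true,
        Some(host) => host.parse::<IpAddr>().map_or(false, |ip| ip.is_loopback()),
        None => false,
    }
}
```

A startup check could refuse to run when `is_local_endpoint` returns `false`, which turns an accidental cloud endpoint in the config file into a visible error instead of a silent data transfer.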


Step 4: Run Your First IronClaw Agent


With the backend configured and a model running, you are ready to launch IronClaw. From the project directory, execute the compiled binary:

    ./target/release/ironclaw --goal "Summarize all .txt files in ~/Documents and save the output to summary.md"

IronClaw will parse your goal, decompose it into subtasks, invoke the local LLM for reasoning steps, and execute the required file operations — all entirely on your machine. From here, you can explore more complex multi-step goals, integrate custom tools, or connect IronClaw to other local services in your stack.
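The plan-then-execute flow described above can be pictured as a short loop. The sketch below is purely illustrative: the `Step` enum, the `Planner` trait, `FixedPlanner`, and `run_goal` are names invented for this example, and a real planner would ask the local LLM for the steps instead of returning a canned list. It does show two properties that matter for unattended runs: a step budget, and stopping at the first failed step.

```rust
/// One unit of work produced by planning (hypothetical shape).
#[derive(Debug, Clone, PartialEq)]
pub enum Step {
    ReadFile(String),
    Summarize,
    WriteFile(String),
}

/// Anything that can turn a goal into steps. A real implementation
/// would call the local LLM here.
pub trait Planner {
    fn plan(&self, goal: &str) -> Vec<Step>;
}

/// Canned planner for demonstration: always returns the same steps.
pub struct FixedPlanner(pub Vec<Step>);

impl Planner for FixedPlanner {
    fn plan(&self, _goal: &str) -> Vec<Step> {
        self.0.clone()
    }
}

/// Plans the goal, rejects plans over budget, then executes the steps in
/// order and stops at the first failure. Returns the number of steps run.
pub fn run_goal<P: Planner>(
    planner: &P,
    goal: &str,
    max_steps: usize,
    mut execute: impl FnMut(&Step) -> Result<(), String>,
) -> Result<usize, String> {
    let steps = planner.plan(goal);
    if steps.len() > max_steps {
        return Err(format!(
            "plan has {} steps, budget is {}",
            steps.len(),
            max_steps
        ));
    }
    for (index, step) in steps.iter().enumerate() {
        execute(step).map_err(|e| format!("step {} failed: {}", index + 1, e))?;
    }
    Ok(steps.len())
}
```

Passing the executor in as a closure keeps the loop independent of how steps are actually carried out, so the same loop can be driven by a sandboxed executor in production and a recording stub in tests.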


Frequently Asked Questions About IronClaw


What is IronClaw?


IronClaw is an open-source AI agent framework written in Rust that runs entirely on your local machine. It allows developers and enterprises to build and run autonomous AI agents that automate complex tasks using locally hosted large language models, without sending any data to external servers or cloud APIs. Unlike most AI agent frameworks that depend on cloud-based LLMs, IronClaw is designed from the ground up for local, private, and air-gap-compatible deployment. You can learn more about how it fits into the broader landscape on our AI agents overview page.


Is IronClaw free?


Yes, IronClaw is completely free to use. The project is released under an open-source license (MIT or Apache 2.0 — check the repository for the current license terms), which means you can download, use, modify, and distribute it without paying any licensing fees. There is no paid tier, no usage-based billing, and no subscription required. The only costs involved are your own hardware for running the local LLM inference backend — which you would need regardless of the agent framework you choose.


Does IronClaw need an internet connection?


No. Once installed and configured with a local LLM backend such as Ollama or llama.cpp, IronClaw operates entirely offline. It does not make any outbound network calls during normal agent execution. This makes it one of the very few AI agent frameworks that can be deployed in fully air-gapped environments — such as secure corporate networks, government infrastructure, or any setting where outbound internet access is restricted or prohibited. An internet connection is only needed during the initial setup phase to download the Rust toolchain, clone the repository, and pull your chosen LLM model files.
